The AI Proctoring Dilemma: Balancing Accuracy with Fairness

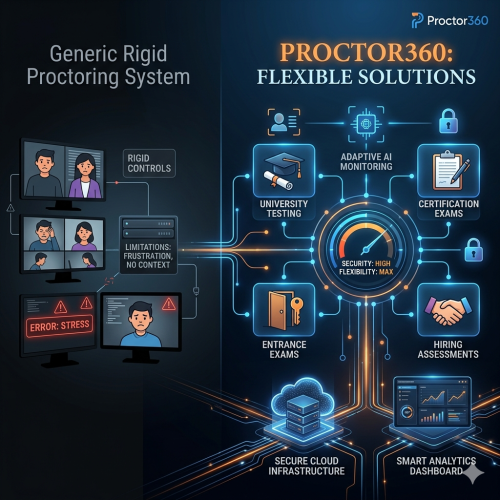

Are you grappling with the tension between leveraging artificial intelligence for exam security and ensuring fair, accurate assessments for every student? This is a daily challenge for modern assessment companies and educational institutions.

While AI proctoring offers incredible scalability and efficiency, it often carries a heavy burden: false positives. These incorrect flags can erode trust, overwhelm administrative teams, and ultimately undermine the integrity they were meant to protect. This article explores the true cost of false flags and how a Hybrid AI + Human model provides the gold-standard solution.

The True Cost of False Positives: Statistics That Matter

The problem of inaccurate AI flags is widespread. Reports from the proctoring industry indicate that some pure AI detection tools generate false positives at rates of 30% to 50%.

- Operational Strain: Even a modest 4% false positive rate, applied across millions of scans, translates into a massive volume of incorrect flags that human teams must resolve manually.

- The Human Toll: False accusations cause significant stress, particularly for vulnerable groups. This includes neurodiverse students, non-native speakers, and those with disabilities whose natural behaviors (like fidgeting or eye movement) may be misinterpreted by an algorithm.

- Institutional Reputation: Frequent errors damage student trust and create an administrative "review debt" that can take weeks to clear.
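To make the operational strain concrete, here is a back-of-the-envelope sketch. Only the 4% rate comes from the text above; the session count and the minutes-per-review figure are hypothetical assumptions chosen for illustration, and the calculation simplifies by treating the rate as the share of all sessions that are flagged incorrectly.

```python
def false_flag_workload(sessions: int,
                        false_positive_rate: float,
                        minutes_per_review: float) -> tuple[int, float]:
    """Return (expected false flags, reviewer hours needed to clear them).

    Simplification: the rate is applied to every session, i.e. we assume
    nearly all sessions are honest.
    """
    false_flags = round(sessions * false_positive_rate)
    review_hours = false_flags * minutes_per_review / 60
    return false_flags, review_hours


# Hypothetical volume: 1,000,000 sessions, 5 minutes of human review per flag.
flags, hours = false_flag_workload(1_000_000, 0.04, 5)
print(f"{flags:,} false flags, about {hours:,.0f} reviewer hours")
# prints: 40,000 false flags, about 3,333 reviewer hours
```

Under these assumed numbers, a rate that sounds small on paper still produces tens of thousands of flags for a review team to clear.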

Why Pure AI Proctoring Falls Short

Technical Limitations

Pure AI systems lack contextual understanding. They are programmed to detect patterns but cannot "read the room."

- Environmental Triggers: Poor lighting, a pet entering the frame, or a sudden noise can trigger a flag.

- Natural Human Behavior: A student muttering while problem-solving or looking away to think is often flagged as suspicious by a rigid algorithm.

Ethical Considerations

- Algorithmic Bias: There is a documented risk that AI may inadvertently penalize certain demographic groups because of gaps or imbalances in its training data.

- Privacy Concerns: Continuous biometric monitoring raises questions about surveillance and data retention, often making students feel their privacy has been invaded.

- The Empathy Gap: An algorithm cannot weigh extenuating circumstances or offer a compassionate assessment in borderline cases.

The Hybrid Solution: AI + Human Proctoring

The most effective strategy to overcome these limitations is to combine AI's scalability with human intelligence.

How the Hybrid Model Works

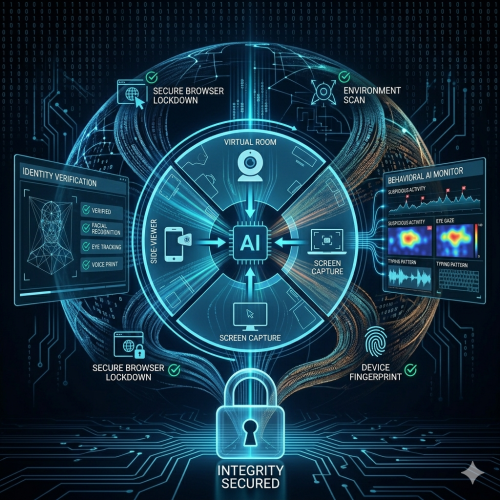

- AI as the First Responder: The AI monitors the session in real time, identifying potential irregularities at scale.

- Human as the Final Judge: Instead of issuing an automatic "fail," the AI escalates the incident to a trained human proctor.

- Contextual Review: The proctor reviews the footage to determine if the behavior was a genuine breach or a harmless action (like a student stretching).
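The three steps above can be sketched as a small triage function. This is a minimal illustration, not any vendor's implementation: the `Incident` fields, the 0-to-1 `ai_score`, the `escalation_threshold` default, and the `demo_reviewer` stand-in are all hypothetical names and values.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Optional


class Verdict(Enum):
    CLEARED = "cleared"      # harmless behavior
    CONFIRMED = "confirmed"  # genuine breach


@dataclass
class Incident:
    session_id: str
    event: str       # hypothetical label, e.g. "stretching", "second_voice"
    ai_score: float  # hypothetical 0..1 suspicion score from the AI layer


def triage(incident: Incident,
           human_review: Callable[[Incident], Verdict],
           escalation_threshold: float = 0.5) -> Optional[Verdict]:
    """AI screens; a human proctor decides anything the AI escalates.

    Returns None when the score is below the threshold (nothing escalated).
    The AI layer never issues a verdict on its own.
    """
    if incident.ai_score < escalation_threshold:
        return None
    return human_review(incident)


def demo_reviewer(incident: Incident) -> Verdict:
    # Stand-in for a trained proctor reviewing the footage.
    if incident.event == "stretching":
        return Verdict.CLEARED
    return Verdict.CONFIRMED


result = triage(Incident("s-001", "stretching", 0.8), demo_reviewer)
print(result)  # Verdict.CLEARED
```

The design point is that the threshold only controls what reaches a person; the outcome always comes from the reviewer, never from the score.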

Proven Results: Advanced hybrid systems consistently achieve false positive rates below 5%, reducing erroneous flags by as much as 40% compared to traditional methods.

Practical Implementation for Organizations

Step 1: Establish a Baseline

Analyze your current cheating-detection accuracy and false positive rates. Identify where in your workflow, from onboarding to incident review, a hybrid layer fits best.
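A baseline can be computed from past sessions where a human reviewer has already confirmed the outcome. The sketch below assumes each session is recorded as a pair of booleans (AI flagged it, a violation was confirmed); that record format and the sample counts are hypothetical.

```python
def baseline_metrics(sessions):
    """sessions: iterable of (ai_flagged, confirmed_violation) booleans."""
    tp = fp = tn = fn = 0
    for flagged, violation in sessions:
        if flagged and violation:
            tp += 1          # correct flag
        elif flagged:
            fp += 1          # false flag on an honest session
        elif violation:
            fn += 1          # missed violation
        else:
            tn += 1          # honest session, correctly left alone
    return {
        # Share of honest sessions that were flagged anyway.
        "false_positive_rate": fp / (fp + tn) if fp + tn else 0.0,
        # Share of flags that turned out to be real violations.
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        # Share of real violations that were flagged.
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


# Hypothetical audit of 100 sessions.
history = ([(True, True)] * 2 + [(True, False)] * 3
           + [(False, True)] * 1 + [(False, False)] * 94)
metrics = baseline_metrics(history)
print({name: round(value, 3) for name, value in metrics.items()})
```

Tracking precision alongside the false positive rate shows how much reviewer time is spent on flags that lead nowhere.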

Step 2: Technology Integration

Ensure your solution offers robust API integration with your LMS (Canvas, Moodle, etc.). Use Custom Rule Configuration to tailor AI sensitivity to your specific exam policies.
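As an illustration of what tailoring AI sensitivity to exam policy might look like, here is a minimal sketch of a per-exam rule set. The `ExamRules` fields, the event names, and the thresholds are hypothetical; a real proctoring product will expose its own configuration options.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExamRules:
    # Seconds a student may look away before the AI raises a flag.
    gaze_away_seconds: float = 10.0
    # Event types this exam's policy explicitly permits.
    ignored_events: frozenset = frozenset()


def should_flag(event: str, duration_s: float, rules: ExamRules) -> bool:
    """Decide whether an observed event should be escalated for review."""
    if event in rules.ignored_events:
        return False
    if event == "gaze_away":
        return duration_s >= rules.gaze_away_seconds
    return True  # any other unlisted event goes to a human proctor


# Hypothetical policies: an open-book exam tolerates longer look-aways
# and permits reference material on the desk.
closed_book = ExamRules()
open_book = ExamRules(gaze_away_seconds=60.0,
                      ignored_events=frozenset({"book_on_desk"}))

print(should_flag("gaze_away", 20, closed_book))  # True
print(should_flag("gaze_away", 20, open_book))    # False
```

Keeping the policy in data rather than in the detection logic lets each exam adjust sensitivity without retraining or redeploying the AI layer.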

Step 3: Process Optimization

Establish clear escalation protocols. Define exactly when an incident should move from a proctor to an academic integrity committee. Implement Continuous Quality Assurance by auditing a sample of both flagged and cleared sessions.
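One simple way to run the audit described above is to draw a random sample from both pools of sessions. The sketch below uses Python's standard `random` module; the 10% fraction, the session ID format, and the function name are hypothetical choices.

```python
import random


def audit_sample(flagged_ids, cleared_ids, fraction=0.1, seed=None):
    """Pick a random share of flagged AND cleared sessions for QA review.

    Sampling cleared sessions matters as much as flagged ones: it is the
    only way to catch violations the system let through.
    """
    rng = random.Random(seed)  # pass a seed to make an audit reproducible

    def pick(ids):
        ids = list(ids)
        if not ids:
            return []
        k = max(1, round(len(ids) * fraction))  # always audit at least one
        return rng.sample(ids, k)

    return {"flagged": pick(flagged_ids), "cleared": pick(cleared_ids)}


# Hypothetical week: 20 flagged sessions, 200 cleared sessions.
flagged = [f"F-{i:03d}" for i in range(20)]
cleared = [f"C-{i:03d}" for i in range(200)]
sample = audit_sample(flagged, cleared, fraction=0.1, seed=42)
print(len(sample["flagged"]), len(sample["cleared"]))  # 2 20
```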

Global Compliance and Future Trends

| Region | Primary Focus | Key Regulation |
| --- | --- | --- |
| North America | Student Data Privacy | FERPA |
| Europe | Data Sovereignty | GDPR |
| Middle East/Asia | Scalability & Cultural Context | Local Privacy Laws |

The Path Forward

The automated proctoring market is projected to grow at a CAGR of 18.7% through 2033. Future trends include:

- LLM-Based Contextual Analysis: Using large language models to better "understand" the context of a student's environment.

- Advanced Behavioral Biometrics: Analyzing typing cadence or mouse movements for non-intrusive identity verification.

- Blockchain Integration: Creating immutable exam logs for absolute transparency.

Conclusion

The challenge of AI false positives is a hurdle, but not a dead end. By combining the scalability of AI with the nuanced judgment of human proctors, institutions can protect student well-being while upholding the highest standards of integrity.

Explore how Proctor360’s hybrid solution can transform your assessment integrity today. Schedule a demo: https://meetings.hubspot.com/calvin-rimes/intro-call-with-proctor360-1